davejonescue

Puritan Board Junior

Disregard post. I thought I had a way to use Word macros to complete this task with help. But a single EEBO-TCP doc running it made it crash, lol. I can dream though, I can dream.

I don't know if it is my computer or just the sheer number of misspelled words in older Puritan documents, but I did find a macro that would transfer all misspelled words to another document. Doing this would theoretically allow one to make a "master list" of potential misspelled words, and then one should be able to run a macro correcting them in every Puritan document. But it kept crashing on me. If the Lord ever blesses me financially, I think I would pay to have software created for this specific purpose: upload a document and auto-convert the text to proper spelling. But he hasn't, so it is on the shelf as of right now.

Oo!

Oh...

Honestly this is already an amazing resource. But some day this has got to work!

True, but that is kind of the vision I had with the "master dictionary," a DOCX with all potential word misspellings and variations, which would show as misspellings. Personally, I am not averse to thees and thous, nor archaic wording, as long as those words are spelled correctly. And to be honest with you, I can read 99.9% of EEBO-TCP text fine, and I honestly think many people can as well. But it would go a long way toward easing people's preconceived apprehensions if the barrier of grammatical discrepancies were removed. Maybe one day the Lord will put it on the heart of a software engineer to do something like this, but until then, I am forever indebted to God, and to EEBO-TCP, for making what they have made available free of charge.

That would have been very cool. There are not just the irregular spellings but also variability between spellings. The txt files I've used from archive.org are very messy from that point of view and need a lot of manual work.

I would really like to talk to you more about this, and see if there is a way I could possibly license the software from you. With this macro, the potential is to be able to create a "master list" of all known misspellings in all of the known EEBO-TCP Puritan documents by running them all through the macro and disposing of duplicates. If it is possible to add that list to your software without you having to reprogram anything, this could be groundbreaking. Because once that software plus the master list is created, it could be used not only for EEBO-TCP literature, but possibly as the key to collecting an entire Puritan corpus: hunt down all known texts, use the same method EEBO-TCP used to OCR their documents (which I want to say is listed open-source online), then run them through "the key," and potentially (now I know this is dreaming) have a correct current spelling of all known Puritan works.

I made software that does this a couple of years ago, and I made a basic list of words that should be respelled, with a few thousand entries. Even so, any book passed through the software has to be checked by a human reviewer because, for various reasons, the software cannot perform with 100% accuracy. It is much faster than retyping, though.

Oh, and then it would be fairly straightforward to write some additional code to compile a list of misspelled words and eliminate duplicates, then search and replace through all the HTML files that have been stored locally.
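A minimal sketch of that "compile a list and eliminate duplicates" step, assuming plain-text input and a modern word list to compare against. The tiny `MODERN_WORDS` set and the sample sentences are hypothetical stand-ins, not anything from the actual files; a real run would load a full English word list.

```python
import re
from collections import Counter

# Hypothetical stand-in for a real modern-English word list.
MODERN_WORDS = {"the", "most", "holy", "men", "deceive", "themselves"}


def collect_unknown_words(texts, known_words):
    """Count each lowercased word that does not appear in known_words."""
    counts = Counter()
    for text in texts:
        for word in re.findall(r"[A-Za-z]+", text):
            lowered = word.lower()
            if lowered not in known_words:
                counts[lowered] += 1
    return counts


# Made-up sample sentences, only to show the shape of the output.
sample_texts = [
    "The moste holy men deceive themselues.",
    "Moste men deceive themselues.",
]

unknown = collect_unknown_words(sample_texts, MODERN_WORDS)
for word, count in sorted(unknown.items()):
    print(word, count)
```

Because the words are counted in a `Counter`, duplicates collapse automatically, and the counts show which spellings are worth adding to the dictionary first.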

I might build a dictionary of sorts: moste = most, selues = selves, etc and then apply that dictionary to each file in sequence. I anticipate that an approach like this could process all the files in a day.
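One way such a dictionary could be applied is sketched here, under some assumptions: keys are stored in lowercase, only whole words are replaced, and the capitalisation of the original word is carried over. The first two entries come from the post above; `vnto` is added purely for illustration, and the function names are hypothetical.

```python
import re

# Keys must be lowercase. "moste" and "selues" are from the post;
# "vnto" is an extra illustrative entry.
RESPELLINGS = {"moste": "most", "selues": "selves", "vnto": "unto"}


def match_case(original, replacement):
    """Carry the capitalisation of the original word over to the replacement."""
    if original.isupper() and len(original) > 1:
        return replacement.upper()
    if original[0].isupper():
        return replacement.capitalize()
    return replacement


def modernize(text, respellings):
    """Replace whole words found in respellings, ignoring case when matching."""
    if not respellings:
        return text
    # Longest keys first so that a longer spelling wins over a shorter prefix.
    keys = sorted(respellings, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(key) for key in keys) + r")\b",
        re.IGNORECASE,
    )

    def swap(match):
        word = match.group(0)
        return match_case(word, respellings[word.lower()])

    return pattern.sub(swap, text)


print(modernize("Moste men deceive their owne selues.", RESPELLINGS))
```

The `\b` word boundaries keep `selues` from being rewritten inside a longer word such as `themselues`, which would need its own dictionary entry.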

I will warn though that such a thing would be prone to mistakes because there was no standardized spelling and printers often varied the spelling of words (or the incorporation of shortcuts) even on the same page. So I suspect you will have multiple words with the same spelling and correcting one will make the other worse. But you might get 90% of the way there with an approach like this.
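A rough way to catch some of those cases ahead of time is to hold back any dictionary entry whose old spelling is itself a valid modern word, since replacing it everywhere would damage the places where it was already right. The sketch below assumes a modern word list is available; `MODERN_WORDS` and the entries are illustrative only.

```python
# Hypothetical stand-in for a real modern-English word list.
MODERN_WORDS = {"be", "bee", "do", "doe", "most", "than", "then"}

# Illustrative entries: the last three old spellings are also modern words.
RESPELLINGS = {"moste": "most", "bee": "be", "then": "than", "doe": "do"}


def split_by_risk(respellings, modern_words):
    """Separate entries that are safe to apply blindly from those needing review."""
    safe, needs_review = {}, {}
    for old, new in respellings.items():
        target = needs_review if old in modern_words else safe
        target[old] = new
    return safe, needs_review


safe, needs_review = split_by_risk(RESPELLINGS, MODERN_WORDS)
print("apply automatically:", sorted(safe))
print("review in context:", sorted(needs_review))
```

This only catches collisions with modern words. It would not catch a printer using one old spelling for two different words, which still needs a human reading the passage.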

You do realize that EEBO's goal was not completely error-free texts but reasonably complete ones? So you see <o> or some other symbol a lot where text (Greek, Hebrew, Latin) was omitted. And speaking from experience, each file is only as good as its typist and the condition of the page images. EEBO typists make mistakes as well, leaving out words and text between similar words (type "thereupon," look up and see "thereupon" on the next line, and continue, not knowing text was skipped; a common mistake, and there's a Greek word for it). You'll need to put a huge proviso on any end product so folks know these are still uncorrected texts as far as any mistakes and omissions by EEBO. The only way to find and correct those is to compare each text with a good original of the edition or the facsimile used by EEBO.

Of course, I know there is another way to do it besides creating a "master list." That is if the software you created has an option to "add new list" of misspelled words, then the docs could singly be run through the macro, create a list of misspelled words for that specific doc, put into your program, and re-edited individually. My goal would still be the same though, and that would be to put them into the public domain for universal access. But, this would also allow other people to pick up the helm and "tidy up" the works for e-publishing, because replacing the spelling is the biggest time consumer. Next would be figuring out incomplete words (which only constitute a fraction of the texts in their entirety.)

I am very serious about this though. My email is [email protected] if you would like to talk privately; but I am willing to pay (if it is within my means) to use it. I am going to go to bed for now, got to get up for work in a few. But this is very exciting. Thank you so much for reaching out, and hope to hear from you soon. God Bless.

edit*** And really just to reiterate: this dream is only for the good of the Church. I envision a website where people can come and download a PDF/EPUB of the entire known Puritan corpus for free. And with tools like DeepL, quite possibly in multiple languages such as French, Spanish, Chinese, Dutch, German; and the list goes on. All the docs I have, all 4,200 or so in DOC format, come to only 625 MB. Hosting the entire corpus should not be hard, even on something like an $8.99 annual Google Sites plan.

Logan, are the notes (what were originally marginal notes in the EEBO texts) embedded in the HTML, and can they be pulled out with a script to automatically make Word footnotes?

Chris, perhaps an option for your base text would be to output pure text (.txt) file, but wherever it runs into a footnote put in a couple of empty lines, insert the word "footnote", add in the footnote text, then a couple more empty lines, and continue the text. Something like this from Bownd:

View attachment 9570
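The plain-text layout described above could be produced along these lines. This is only a sketch: it assumes the text has already been split into a list of `("text", ...)` and `("footnote", ...)` pieces, which is a made-up intermediate format, and the sample strings are placeholders rather than anything from Bownd.

```python
def render_with_footnotes(segments):
    """Join text and footnote segments, setting each footnote off with empty lines.

    segments is a list of (kind, text) pairs where kind is "text" or "footnote".
    """
    blocks = []
    for kind, text in segments:
        if kind == "footnote":
            # Label the note so it is easy to find when pasting into Word.
            blocks.append("footnote\n" + text.strip())
        else:
            blocks.append(text.strip())
    # Three newlines leave two empty lines between neighbouring blocks.
    return "\n\n\n".join(blocks) + "\n"


# Placeholder content only.
sample = [
    ("text", "Main text before the note."),
    ("footnote", "Text of the marginal note."),
    ("text", "Main text continues after the note."),
]
print(render_with_footnotes(sample))
```

Every block is separated the same way here, so two consecutive pieces of main text would also be split by empty lines; merging those first would be a small extra step.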

But yes, it looks like the footnotes are links to separate pages so you'd just have to follow that link (like I'm already doing to grab the main text) and grab the footnote. Should definitely be possible. Going to Word from that is going to be trickier but that might be possible too.

Thank you so much for your help, this is truly a blessing. I will get working on that spreadsheet beginning today. I think I have a way to handle the multiple-spelling issue: if we keep a record of all the words that were replaced, and look over them to see which words are duplicated, we can then go into a program like Word (sorry, I'm not tied to Word, it's just all I know), auto find-and-highlight those words, and correct them in context. But again, thank you so much, and I will let you know when I have that spreadsheet done.

Proof of concept:

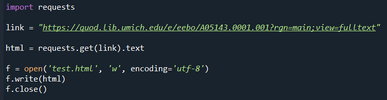

First a spreadsheet containing a list of files to be processed, presumably pretty easy to generate since you have that information in your "creator" column. The fulltext URL can probably be generated automatically from the URL you list in your database.

View attachment 9562

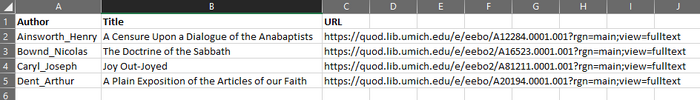

Another spreadsheet with a list of dictionary items to replace:

View attachment 9563

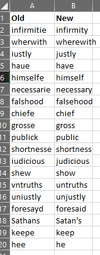

The code to iterate over it all:

View attachment 9568

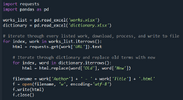

The output:

View attachment 9565

Old:

View attachment 9566

New:

View attachment 9567

The above four works took about 16 seconds total to download, process, replace archaic words, and write to files on my 7-year old machine. The time will obviously go up the more words there are to find and replace but I think this is a good proof of concept.
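The code itself is only available as an attachment in the thread, so the following is not that code. It is a minimal sketch of the same general shape (a list of works, a list of replacements, and a loop that fetches, replaces, and writes), with several assumptions: the column names, the example row, and the `fake_fetch` stand-in for the download step are all hypothetical, and the replacement is case-sensitive for brevity.

```python
import csv
import io
import re
import tempfile
from pathlib import Path

# Hypothetical spreadsheet contents, inlined as CSV so the sketch is self-contained.
WORKS_CSV = """author,title,url
Example Author,Example Title,https://example.org/work1
"""

DICTIONARY_CSV = """old,new
moste,most
selues,selves
"""


def load_rows(csv_text):
    """Parse CSV text into a list of dictionaries keyed by the header row."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def replace_words(text, respellings):
    """Apply every whole-word replacement in turn (case-sensitive)."""
    for old, new in respellings.items():
        text = re.sub(r"\b" + re.escape(old) + r"\b", new, text)
    return text


def process_works(works, respellings, fetch, out_dir):
    """Fetch each work, respell it, and write it to out_dir as a .txt file."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for work in works:
        raw = fetch(work["url"])
        modern = replace_words(raw, respellings)
        # Build a filesystem-friendly file name from the title.
        safe_title = re.sub(r"[^A-Za-z0-9]+", "_", work["title"]).strip("_")
        path = out_dir / (safe_title + ".txt")
        path.write_text(modern, encoding="utf-8")
        written.append(path)
    return written


def fake_fetch(url):
    """Stand-in for the real download step; returns made-up text."""
    return "The moste holy men deceive their owne selues."


works = load_rows(WORKS_CSV)
respellings = {row["old"]: row["new"] for row in load_rows(DICTIONARY_CSV)}

with tempfile.TemporaryDirectory() as tmp:
    for path in process_works(works, respellings, fake_fetch, tmp):
        print(path.name, "->", path.read_text(encoding="utf-8"))
```

Passing the download step in as `fetch` keeps the loop testable offline, and the same loop would work on files that are already saved locally by swapping in a function that reads from disk.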

I'd be happy to help, but the first thing I need is some kind of spreadsheet or table with author name, title, and URL. The word replacement could be done at a later point in time.

Is that code in a jupyter notebook?

Yes sir, I understand this; but my intent for this is not really for scholars, or crowds where perfection is necessary. I do not mind the Greek and Hebrew not being included, or the Latin not being translated. The goal is more devotional than scholarly; and truthfully, the intended audience is those who are probably not fluent in Greek or Hebrew to begin with, like a large majority of the church. Putting in a "warning" is no problem. My only goal is to correct the spelling of the EEBO-TCP texts for now and put them in the public domain, as well as creating EPUBs/PDFs. In the end, it is one huge step toward getting the Puritan corpus into current English. But I definitely see your point.

Yes, this is awesome. And I was just thinking that with Excel, I am pretty sure you can auto-alphabetize. So it should be pretty straightforward to do that, see the words that might get jumbled in correction, take them out of the dictionary, and leave them for editing within Word so they can be corrected in context. This is awesome. Thank you so much for your contribution!!

I did this as well. Just checked my word swap list, and I have about 7000 words so far.

I bet if we compiled our lists, it would be pretty big. If anyone wants to do this, let me know.
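Combining two such lists could look something like this, assuming each list is a simple old-spelling to new-spelling mapping. The entries are illustrative, not taken from anyone's actual list. Where the two lists disagree about a word, the sketch sets it aside rather than picking a winner.

```python
def merge_word_lists(first, second):
    """Merge two respelling lists, setting aside words the lists disagree on."""
    merged, conflicts = {}, {}
    for old in sorted(set(first) | set(second)):
        # Collect every distinct replacement offered for this old spelling.
        targets = {words[old] for words in (first, second) if old in words}
        if len(targets) == 1:
            merged[old] = targets.pop()
        else:
            conflicts[old] = sorted(targets)
    return merged, conflicts


# Illustrative entries only.
list_a = {"moste": "most", "selues": "selves", "then": "than"}
list_b = {"moste": "most", "vnto": "unto", "then": "then"}

merged, conflicts = merge_word_lists(list_a, list_b)
print(len(merged), "agreed entries")
print("conflicts:", conflicts)
```

The conflicts are exactly the words that probably need correcting in context rather than by blanket replacement.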

@NaphtaliPress I can extract the margin notes pretty easily from the Oxford page. The other one not so much because it requires following and gathering data from over 2000 links.

Unfortunately it looks like creating footnotes in Word is a very manual process. So I've generated a Word document for you that simply breaks out those marginal notes in a very obvious way:

Before:

View attachment 9572

After:

View attachment 9571

The Word document is in the attached zip file, if you find that useful. Seems like it would be at least a good start for copying/pasting.

Let me know if there is something else that might help. I'm sure you'd much rather your time be spent doing something more productive than repetitive formatting or parsing. Do you do all the footnotes in Word and then import the Word document into InDesign?

Thanks Logan; really appreciate it. Still will be a slog but that sort of processing will help I think.

No sir, I am not worried about the footnotes. As I was creating my index, I simply went to "full text," copied everything, and pasted it into a Word doc. I completely disregarded the footnotes; in fact, I think I got rid of them all by deleting all hyperlinks, which did away with the "page numbers" in between the text and the footnote links within the text. Not having footnotes doesn't bother me at all.

When you created your Word files, did you worry about the footnotes? Since EEBO seems to have them all as links to separate pages, this could be problematic, not just for speed but if they start blocking hundreds of thousands of web-page requests from the same IP.

If you're just concerned about the base text, that would make this a whole lot simpler.
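On the worry about being blocked, one common precaution is simply to pause between requests. The sketch below shows that idea only; the one-second default is an arbitrary assumption, not a known limit of any site, and `fake_fetch` stands in for a real download function.

```python
import time


def fetch_politely(urls, fetch, delay_seconds=1.0, sleep=time.sleep):
    """Fetch each URL in turn, pausing between consecutive requests."""
    pages = {}
    for index, url in enumerate(urls):
        if index > 0:
            sleep(delay_seconds)  # pause before every request except the first
        pages[url] = fetch(url)
    return pages


def fake_fetch(url):
    """Stand-in for a real download; returns made-up text."""
    return "text of " + url


pages = fetch_politely(
    ["https://example.org/a", "https://example.org/b"],
    fake_fetch,
    delay_seconds=0.0,  # no waiting in this demonstration
)
print(len(pages), "pages fetched")
```

Skipping the footnote links altogether, as suggested above, cuts the number of requests far more than any delay would.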
